2024 New Professional-Machine-Learning-Engineer Exam Dumps with PDF and VCE Free: https://www.2passeasy.com/dumps/Professional-Machine-Learning-Engineer/

It is more faster and easier to pass the Google Professional-Machine-Learning-Engineer exam by using Printable Google Google Professional Machine Learning Engineer questuins and answers. Immediate access to the Improve Professional-Machine-Learning-Engineer Exam and find the same core area Professional-Machine-Learning-Engineer questions with professionally verified answers, then PASS your exam with a high score now.

Online Professional-Machine-Learning-Engineer free questions and answers of New Version:

NEW QUESTION 1

You are responsible for building a unified analytics environment across a variety of on-premises data marts. Your company is experiencing data quality and security challenges when integrating data across the servers, caused by the use of a wide range of disconnected tools and temporary solutions. You need a fully managed, cloud-native data integration service that will lower the total cost of work and reduce repetitive work. Some members on your team prefer a codeless interface for building Extract, Transform, Load (ETL) process. Which service should you use?

- A. Dataflow

- B. Dataprep

- C. Apache Flink

- D. Cloud Data Fusion

Answer: D

NEW QUESTION 2

You have a demand forecasting pipeline in production that uses Dataflow to preprocess raw data prior to model training and prediction. During preprocessing, you employ Z-score normalization on data stored in BigQuery and write it back to BigQuery. New training data is added every week. You want to make the process more efficient by minimizing computation time and manual intervention. What should you do?

- A. Normalize the data using Google Kubernetes Engine

- B. Translate the normalization algorithm into SQL for use with BigQuery

- C. Use the normalizer_fn argument in TensorFlow's Feature Column API

- D. Normalize the data with Apache Spark using the Dataproc connector for BigQuery

Answer: B

NEW QUESTION 3

You are building a real-time prediction engine that streams files which may contain Personally Identifiable Information (Pll) to Google Cloud. You want to use the Cloud Data Loss Prevention (DLP) API to scan the files. How should you ensure that the Pll is not accessible by unauthorized individuals?

- A. Stream all files to Google CloudT and then write the data to BigQuery Periodically conduct a bulk scan of the table using the DLP API.

- B. Stream all files to Google Cloud, and write batches of the data to BigQuery While the data is being written to BigQuery conduct a bulk scan of the data using the DLP API.

- C. Create two buckets of data Sensitive and Non-sensitive Write all data to the Non-sensitive bucket Periodically conduct a bulk scan of that bucket using the DLP API, and move the sensitive data to the Sensitive bucket

- D. Create three buckets of data: Quarantine, Sensitive, and Non-sensitive Write all data to the Quarantine bucket.

- E. Periodically conduct a bulk scan of that bucket using the DLP API, and move the data to either the Sensitive or Non-Sensitive bucket

Answer: A

NEW QUESTION 4

You are developing ML models with Al Platform for image segmentation on CT scans. You frequently update your model architectures based on the newest available research papers, and have to rerun training on the same dataset to benchmark their performance. You want to minimize computation costs and manual intervention while having version control for your code. What should you do?

- A. Use Cloud Functions to identify changes to your code in Cloud Storage and trigger a retraining job

- B. Use the gcloud command-line tool to submit training jobs on Al Platform when you update your code

- C. Use Cloud Build linked with Cloud Source Repositories to trigger retraining when new code is pushed to the repository

- D. Create an automated workflow in Cloud Composer that runs daily and looks for changes in code in Cloud Storage using a sensor.

Answer: A

NEW QUESTION 5

You are building a linear regression model on BigQuery ML to predict a customer's likelihood of purchasing your company's products. Your model uses a city name variable as a key predictive component. In order to train and serve the model, your data must be organized in columns. You want to prepare your data using the least amount of coding while maintaining the predictable variables. What should you do?

- A. Create a new view with BigQuery that does not include a column with city information

- B. Use Dataprep to transform the state column using a one-hot encoding method, and make each city a column with binary values.

- C. Use Cloud Data Fusion to assign each city to a region labeled as 1, 2, 3, 4, or 5r and then use that number to represent the city in the model.

- D. Use TensorFlow to create a categorical variable with a vocabulary list Create the vocabulary file, and upload it as part of your model to BigQuery ML.

Answer: C

NEW QUESTION 6

Your data science team needs to rapidly experiment with various features, model architectures, and hyperparameters. They need to track the accuracy metrics for various experiments and use an API to query the metrics over time. What should they use to track and report their experiments while minimizing manual effort?

- A. Use Kubeflow Pipelines to execute the experiments Export the metrics file, and query the results using the Kubeflow Pipelines API.

- B. Use Al Platform Training to execute the experiments Write the accuracy metrics to BigQuery, and query the results using the BigQueryAPI.

- C. Use Al Platform Training to execute the experiments Write the accuracy metrics to Cloud Monitoring, and query the results using the Monitoring API.

- D. Use Al Platform Notebooks to execute the experiment

- E. Collect the results in a shared Google Sheetsfile, and query the results using the Google Sheets API

Answer: A

NEW QUESTION 7

During batch training of a neural network, you notice that there is an oscillation in the loss. How should you adjust your model to ensure that it converges?

- A. Increase the size of the training batch

- B. Decrease the size of the training batch

- C. Increase the learning rate hyperparameter

- D. Decrease the learning rate hyperparameter

Answer: C

NEW QUESTION 8

You recently joined a machine learning team that will soon release a new project. As a lead on the project, you are asked to determine the production readiness of the ML components. The team has already tested features and data, model development, and infrastructure. Which additional readiness check should you recommend to the team?

- A. Ensure that training is reproducible

- B. Ensure that all hyperparameters are tuned

- C. Ensure that model performance is monitored

- D. Ensure that feature expectations are captured in the schema

Answer: B

NEW QUESTION 9

You have a functioning end-to-end ML pipeline that involves tuning the hyperparameters of your ML model using Al Platform, and then using the best-tuned parameters for training. Hypertuning is taking longer than expected and is delaying the downstream processes. You want to speed up the tuning job without significantly compromising its effectiveness. Which actions should you take?

Choose 2 answers

- A. Decrease the number of parallel trials

- B. Decrease the range of floating-point values

- C. Set the early stopping parameter to TRUE

- D. Change the search algorithm from Bayesian search to random search.

- E. Decrease the maximum number of trials during subsequent training phases.

Answer: DE

NEW QUESTION 10

You have trained a model on a dataset that required computationally expensive preprocessing operations. You need to execute the same preprocessing at prediction time. You deployed the model on Al Platform for high-throughput online prediction. Which architecture should you use?

- A. • Validate the accuracy of the model that you trained on preprocessed data• Create a new model that uses the raw data and is available in real time• Deploy the new model onto Al Platform for online prediction

- B. • Send incoming prediction requests to a Pub/Sub topic• Transform the incoming data using a Dataflow job• Submit a prediction request to Al Platform using the transformed data• Write the predictions to an outbound Pub/Sub queue

- C. • Stream incoming prediction request data into Cloud Spanner• Create a view to abstract your preprocessing logic.• Query the view every second for new records• Submit a prediction request to Al Platform using the transformed data• Write the predictions to an outbound Pub/Sub queue.

- D. • Send incoming prediction requests to a Pub/Sub topic• Set up a Cloud Function that is triggered when messages are published to the Pub/Sub topic.• Implement your preprocessing logic in the Cloud Function• Submit a prediction request to Al Platform using the transformed data• Write the predictions to an outbound Pub/Sub queue

Answer: D

NEW QUESTION 11

You are training a TensorFlow model on a structured data set with 100 billion records stored in several CSV files. You need to improve the input/output execution performance. What should you do?

- A. Load the data into BigQuery and read the data from BigQuery.

- B. Load the data into Cloud Bigtable, and read the data from Bigtable

- C. Convert the CSV files into shards of TFRecords, and store the data in Cloud Storage

- D. Convert the CSV files into shards of TFRecords, and store the data in the Hadoop Distributed File System (HDFS)

Answer: B

NEW QUESTION 12

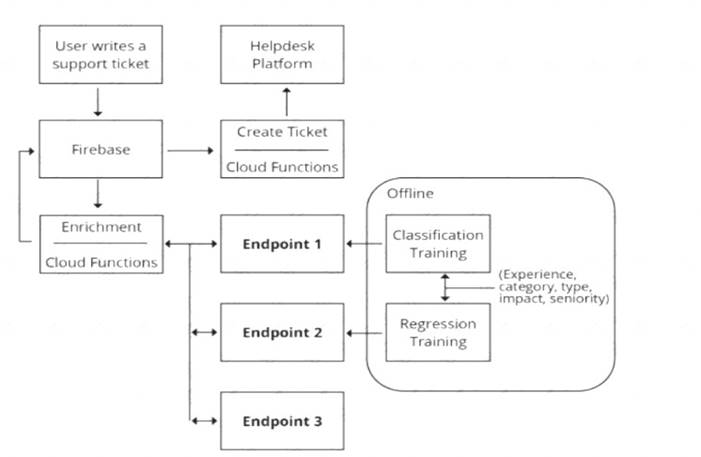

You are designing an architecture with a serveress ML system to enrich customer support tickets with informative metadata before they are routed to a support agent. You need a set of models to predict ticket priority, predict ticket resolution time, and perform sentiment analysis to help agents make strategic decisions when they process support requests. Tickets are not expected to have any domain-specific terms or jargon.

The proposed architecture has the following flow:

Which endpoints should the Enrichment Cloud Functions call?

- A. 1 = Al Platform, 2 = Al Platform, 3 = AutoML Vision

- B. 1 = Al Platform, 2 = Al Platform, 3 = AutoML Natural Language

- C. 1 = Al Platform, 2 = Al Platform, 3 = Cloud Natural Language API

- D. 1 = cloud Natural Language API, 2 = Al Platform, 3 = Cloud Vision API

Answer: B

NEW QUESTION 13

As the lead ML Engineer for your company, you are responsible for building ML models to digitize scanned customer forms. You have developed a TensorFlow model that converts the scanned images into text and stores them in Cloud Storage. You need to use your ML model on the aggregated data collected at the end of each day with minimal manual intervention. What should you do?

- A. Use the batch prediction functionality of Al Platform

- B. Create a serving pipeline in Compute Engine for prediction

- C. Use Cloud Functions for prediction each time a new data point is ingested

- D. Deploy the model on Al Platform and create a version of it for online inference.

Answer: D

NEW QUESTION 14

You have deployed multiple versions of an image classification model on Al Platform. You want to monitor the performance of the model versions overtime. How should you perform this comparison?

- A. Compare the loss performance for each model on a held-out dataset.

- B. Compare the loss performance for each model on the validation data

- C. Compare the receiver operating characteristic (ROC) curve for each model using the What-lf Tool

- D. Compare the mean average precision across the models using the Continuous Evaluation feature

Answer: B

NEW QUESTION 15

You are training a Resnet model on Al Platform using TPUs to visually categorize types of defects in automobile engines. You capture the training profile using the Cloud TPU profiler plugin and observe that it is highly input-bound. You want to reduce the bottleneck and speed up your model training process. Which modifications should you make to the tf .data dataset?

Choose 2 answers

- A. Use the interleave option for reading data

- B. Reduce the value of the repeat parameter

- C. Increase the buffer size for the shuffle option.

- D. Set the prefetch option equal to the training batch size

- E. Decrease the batch size argument in your transformation

Answer: AD

NEW QUESTION 16

You work for a toy manufacturer that has been experiencing a large increase in demand. You need to build an ML model to reduce the amount of time spent by quality control inspectors checking for product defects. Faster defect detection is a priority. The factory does not have reliable Wi-Fi. Your company wants to implement the new ML model as soon as possible. Which model should you use?

- A. AutoML Vision model

- B. AutoML Vision Edge mobile-versatile-1 model

- C. AutoML Vision Edge mobile-low-latency-1 model

- D. AutoML Vision Edge mobile-high-accuracy-1 model

Answer: A

NEW QUESTION 17

You manage a team of data scientists who use a cloud-based backend system to submit training jobs. This system has become very difficult to administer, and you want to use a managed service instead. The data scientists you work with use many different frameworks, including Keras, PyTorch, theano. Scikit-team, and custom libraries. What should you do?

- A. Use the Al Platform custom containers feature to receive training jobs using any framework

- B. Configure Kubeflow to run on Google Kubernetes Engine and receive training jobs through TFJob

- C. Create a library of VM images on Compute Engine; and publish these images on a centralized repository

- D. Set up Slurm workload manager to receive jobs that can be scheduled to run on your cloud infrastructure.

Answer: D

NEW QUESTION 18

......

P.S. Dumps-files.com now are offering 100% pass ensure Professional-Machine-Learning-Engineer dumps! All Professional-Machine-Learning-Engineer exam questions have been updated with correct answers: https://www.dumps-files.com/files/Professional-Machine-Learning-Engineer/ (60 New Questions)