2026 New CCA-505 Exam Dumps with PDF and VCE Free: https://www.2passeasy.com/dumps/CCA-505/

These sample questions are for the Cloudera CCA-505 test, the Cloudera Certified Administrator for Apache Hadoop (CCAH) CDH5 Upgrade Exam. They cover the knowledge points of the real CCA-505 exam.

Check CCA-505 free dumps before getting the full version:

NEW QUESTION 1

You are planning a Hadoop cluster and considering implementing 10 Gigabit Ethernet as the network fabric. Which workloads benefit the most from a faster network fabric?

- A. When your workload generates a large amount of output data, significantly larger than the amount of intermediate data

- B. When your workload generates a large amount of intermediate data, on the order of the input data itself

- C. When your workload consumes a large amount of input data, relative to the entire capacity of HDFS

- D. When your workload consists of processor-intensive tasks

Answer: B

NEW QUESTION 2

You are configuring a cluster running HDFS and MapReduce version 2 (MRv2) on YARN, on Linux. How must you format the underlying filesystem of each DataNode?

- A. They must not be formatted; HDFS will format the filesystem automatically

- B. They may be formatted in any Linux filesystem

- C. They must be formatted as HDFS

- D. They must be formatted as either ext3 or ext4

Answer: D

NEW QUESTION 3

You have a cluster running with the Fair Scheduler enabled. There are currently no jobs running on the cluster, and you submit job A, so that only job A is running on the cluster. A while later, you submit job B. Now job A and job B are running on the cluster at the same time. How will the Fair Scheduler handle these two jobs?

- A. When job A gets submitted, it consumes all the task slots.

- B. When job A gets submitted, it doesn’t consume all the task slots.

- C. When job B gets submitted, job A has to finish first, before job B can be scheduled.

- D. When job B gets submitted, it will get assigned tasks, while job A continues to run with fewer tasks.

Answer: D
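
Fair scheduling is usually described as giving every running job a roughly equal share of resources over time. The sketch below illustrates only that idea: it ignores weights, minimum shares, queues, and preemption, and the slot count of 100 is a hypothetical number that does not come from the question.

```python
from typing import Dict, List


def equal_fair_shares(total_slots: int, running_jobs: List[str]) -> Dict[str, int]:
    """Split task slots as evenly as possible among the running jobs.

    Simplified illustration only; a real scheduler also considers
    weights, minimum shares, and how quickly running tasks finish.
    """
    if not running_jobs:
        return {}
    base, extra = divmod(total_slots, len(running_jobs))
    # The first `extra` jobs receive one additional slot each.
    return {job: base + (1 if i < extra else 0)
            for i, job in enumerate(running_jobs)}


# Hypothetical cluster with 100 task slots.
print(equal_fair_shares(100, ["A"]))       # only job A is running
print(equal_fair_shares(100, ["A", "B"]))  # job B has been submitted as well
```

In practice the shift is gradual: slots move to the second job as the first job's tasks complete and free them.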

NEW QUESTION 4

You are upgrading a Hadoop cluster from HDFS and MapReduce version 1 (MRv1) to one running HDFS and MapReduce version 2 (MRv2) on YARN. You want to set and enforce a block size of 128 MB for all new files written to the cluster after the upgrade. What should you do?

- A. Set dfs.block.size to 128M on all the worker nodes, on all client machines, and on the NameNode, and set the parameter to final.

- B. Set dfs.block.size to 134217728 on all the worker nodes, on all client machines, and on the NameNode, and set the parameter to final.

- C. Set dfs.block.size to 134217728 on all the worker nodes and client machines, and set the parameter to final. You do not need to set this value on the NameNode.

- D. Set dfs.block.size to 128M on all the worker nodes and client machines, and set the parameter to final. You do not need to set this value on the NameNode.

- E. You cannot enforce this, since client code can always override this value.

Answer: C
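
The value 134217728 in the options is simply 128 MB expressed in bytes, taking 1 MB as 1024 × 1024 bytes. A minimal check of that arithmetic (the helper name `block_size_bytes` is made up for illustration):

```python
MIB = 1024 * 1024  # bytes in one mebibyte


def block_size_bytes(mebibytes: int) -> int:
    """Convert a block size in MiB to the byte count used as a config value."""
    return mebibytes * MIB


print(block_size_bytes(128))  # 134217728
```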

NEW QUESTION 5

On a cluster running MapReduce v2 (MRv2) on YARN, a MapReduce job is given a directory of 10 plain text files as its input directory. Each file is made up of 3 HDFS blocks. How many Mappers will run?

- A. We cannot say; the number of Mappers is determined by the ResourceManager

- B. We cannot say; the number of Mappers is determined by the ApplicationManager

- C. We cannot say; the number of Mappers is determined by the developer

- D. 30

- E. 3

- F. 10

Answer: D
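
A rough way to reason about the Mapper count, assuming the default behavior for splittable plain text input where each HDFS block becomes one input split and each split is handled by one Mapper. Custom input formats or split-size settings would change the result, so treat this as a sketch under that assumption:

```python
def estimate_mappers(num_files: int, blocks_per_file: int) -> int:
    """Estimate Mappers assuming one input split (and one Mapper) per block."""
    return num_files * blocks_per_file


# 10 plain text files, each made up of 3 HDFS blocks.
print(estimate_mappers(10, 3))  # 30
```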

NEW QUESTION 6

Your cluster implements HDFS High Availability (HA). Your two NameNodes are named nn01 and nn02. What occurs when you execute the command: hdfs haadmin -failover nn01 nn02

- A. nn02 becomes the standby NameNode and nn01 becomes the active NameNode

- B. nn02 is fenced, and nn01 becomes the active NameNode

- C. nn01 becomes the standby NameNode and nn02 becomes the active NameNode

- D. nn01 is fenced, and nn02 becomes the active NameNode

Answer: D

Explanation: failover – initiate a failover between two NameNodes

This subcommand causes a failover from the first provided NameNode to the second. If the first NameNode is in the Standby state, this command simply transitions the second to the Active state without error. If the first NameNode is in the Active state, an attempt will be made to gracefully transition it to the Standby state. If this fails, the fencing methods (as configured by dfs.ha.fencing.methods) will be attempted in order until one of the methods succeeds. Only after this process will the second NameNode be transitioned to the Active state. If no fencing method succeeds, the second NameNode will not be transitioned to the Active state, and an error will be returned.
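
The decision flow described above can be modeled as a small function. This is a simplified illustration and not Hadoop code: the function name, arguments, and returned dictionary are hypothetical, and fencing outcomes are passed in as a list of booleans in configured order.

```python
from typing import Dict, List


def failover(first_state: str, graceful_ok: bool,
             fencing_results: List[bool]) -> Dict[str, object]:
    """Model the failover steps from the first NameNode to the second."""
    if first_state == "standby":
        # The second NameNode is simply made active, without error.
        return {"first": "standby", "second": "active",
                "fenced": False, "error": False}
    if graceful_ok:
        # The active NameNode transitioned to standby gracefully.
        return {"first": "standby", "second": "active",
                "fenced": False, "error": False}
    # Fencing methods are tried in order until one succeeds;
    # any() stops at the first True value.
    if any(fencing_results):
        return {"first": "fenced", "second": "active",
                "fenced": True, "error": False}
    # No fencing method succeeded: the second NameNode is not activated.
    return {"first": "unknown", "second": "standby",
            "fenced": False, "error": True}


print(failover("active", True, []))
print(failover("active", False, [False, True]))
print(failover("active", False, [False, False]))
```

Note that fencing only comes into play when the graceful transition fails.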

NEW QUESTION 7

Assume you have a file named foo.txt in your local directory. You issue the following three commands:

hadoop fs -mkdir input

hadoop fs -put foo.txt input/foo.txt

hadoop fs -put foo.txt input

What happens when you issue that third command?

- A. The write succeeds, overwriting foo.txt in HDFS with no warning

- B. The write silently fails

- C. The file is uploaded and stored as a plain file named input

- D. You get an error message telling you that input is not a directory

- E. You get an error message telling you that foo.txt already exists. The file is not written to HDFS

- F. You get an error message telling you that foo.txt already exists, and asking you if you would like to overwrite

- G. You get a warning that foo.txt is being overwritten

Answer: E

NEW QUESTION 8

You are configuring your cluster to run HDFS and MapReduce v2 (MRv2) on YARN. Which daemons need to be installed on your cluster's master nodes? (Choose two)

- A. ResourceManager

- B. DataNode

- C. NameNode

- D. JobTracker

- E. TaskTracker

- F. HMaster

Answer: AC

NEW QUESTION 9

For each YARN job, the Hadoop framework generates task log files. Where are Hadoop’s task log files stored?

- A. In HDFS, in the directory of the user who generates the job

- B. On the local disk of the slave node running the task

- C. Cached in the YARN container running the task, then copied into HDFS on job completion

- D. Cached by the NodeManager managing the job containers, then written to a log directory on the NameNode

Answer: B

Explanation: Reference: http://hortonworks.com/blog/simplifying-user-logs-management-and-access-in-yarn/

NEW QUESTION 10

Your cluster is running MapReduce version 2 (MRv2) on YARN. Your ResourceManager is configured to use the FairScheduler. Now you want to configure your scheduler such that a new user on the cluster can submit jobs into their own queue at application submission. Which configuration should you set?

- A. You can specify a new queue name when the user submits a job, and the new queue can be created dynamically if yarn.scheduler.fair.user-as-default-queue = false

- B. yarn.scheduler.fair.user-as-default-queue = false and yarn.scheduler.fair.allow-undeclared-people = true

- C. You can specify a new queue name per application in allocation.fair.allow-undeclared-people = true automatically assigned to the application queue

- D. You can specify a new queue name when the user submits a job, and the new queue can be created dynamically if the property yarn.scheduler.fair.allow-undeclared-pools = true

Answer: A

NEW QUESTION 11

In CDH4 and later, which file contains a serialized form of all the directory and file inodes in the filesystem, giving the NameNode a persistent checkpoint of the filesystem metadata?

- A. fstime

- B. VERSION

- C. Fsimage_N (Where N reflects all transactions up to transaction ID N)

- D. Edits_N-M (Where N-M specifies transactions between transaction ID N and transaction ID M)

Answer: C

Explanation: Reference: http://mikepluta.com/tag/namenode/

NEW QUESTION 12

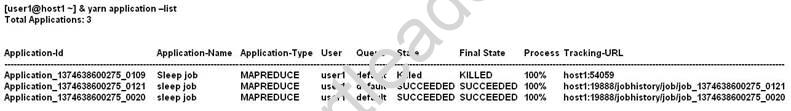

Given:

You want to clean up this list by removing jobs where the state is KILLED. What command you enter?

- A. Yarn application –kill application_1374638600275_0109

- B. Yarn rmadmin –refreshQueue

- C. Yarn application –refreshJobHistory

- D. Yarn rmadmin –kill application_1374638600275_0109

Answer: A

Explanation: Reference: http://docs.hortonworks.com/HDPDocuments/HDP2/HDP-2.1-latest/bk_using-apache-hadoop/content/common_mrv2_commands.html

NEW QUESTION 13

A slave node in your cluster has four 2TB hard drives installed (4 x 2TB). The DataNode is configured to store HDFS blocks on the disks. You set the value of the dfs.datanode.du.reserved parameter to 100GB. How does this alter HDFS block storage?

- A. A maximum of 100 GB on each hard drive may be used to store HDFS blocks

- B. All hard drives may be used to store HDFS blocks as long as at least 100 GB in total is available on the node

- C. 100 GB on each hard drive may not be used to store HDFS blocks

- D. 25 GB on each hard drive may not be used to store HDFS blocks

Answer: C
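
The Hadoop default configuration describes dfs.datanode.du.reserved as reserved space per volume. Assuming that per-volume reading, and using decimal units (2 TB = 2000 GB) purely for readability, the capacity left for HDFS blocks on this node can be worked out as follows. The real setting is given in bytes; the helper name is hypothetical.

```python
from typing import List


def usable_per_volume_gb(disk_sizes_gb: List[int],
                         reserved_per_volume_gb: int) -> List[int]:
    """Capacity available to HDFS on each disk after the per-volume reservation."""
    return [max(size - reserved_per_volume_gb, 0) for size in disk_sizes_gb]


disks_gb = [2000, 2000, 2000, 2000]  # four 2 TB drives, decimal GB assumed
usable = usable_per_volume_gb(disks_gb, 100)

print(usable)                       # [1900, 1900, 1900, 1900]
print(sum(usable))                  # 7600 GB usable for HDFS blocks on the node
print(sum(disks_gb) - sum(usable))  # 400 GB reserved in total
```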

NEW QUESTION 14

You have a Hadoop cluster running HDFS, and a gateway machine external to the cluster from which clients submit jobs. What do you need to do in order to run Impala on the cluster and submit jobs from the command line of the gateway machine?

- A. Install the impalad daemon, statestored daemon, and catalogd daemon on each machine in the cluster and on the gateway node

- B. Install the impalad daemon on each machine in the cluster, the statestored daemon and catalogd daemon on one machine in the cluster, and the impala shell on your gateway machine

- C. Install the impalad daemon and the impala shell on your gateway machine, and the statestored daemon and catalogd daemon on one of the nodes in the cluster

- D. Install the impalad daemon, the statestored daemon, the catalogd daemon, and the impala shell on your gateway machine

- E. Install the impalad daemon, statestored daemon, and catalogd daemon on each machine in the cluster, and the impala shell on your gateway machine

Answer: B

NEW QUESTION 15

Which is the default scheduler in YARN?

- A. Fair Scheduler

- B. FIFO Scheduler

- C. Capacity Scheduler

- D. YARN doesn’t configure a default scheduler. You must first assign an appropriate scheduler class in yarn-site.xml

Answer: C

Explanation: Reference: http://hadoop.apache.org/docs/r2.3.0/hadoop-yarn/hadoop-yarn-site/FairScheduler.html

NEW QUESTION 16

You suspect that your NameNode is incorrectly configured, and is swapping memory to disk. Which Linux commands help you to identify whether swapping is occurring? (Select 3)

- A. free

- B. df

- C. memcat

- D. top

- E. vmstat

- F. swapinfo

Answer: ADE
