2026 New Professional-Data-Engineer Exam Dumps with PDF and VCE Free: https://www.2passeasy.com/dumps/Professional-Data-Engineer/

we provide Verified Google Professional-Data-Engineer exam engine which are the best for clearing Professional-Data-Engineer test, and to get certified by Google Google Professional Data Engineer Exam. The Professional-Data-Engineer Questions & Answers covers all the knowledge points of the real Professional-Data-Engineer exam. Crack your Google Professional-Data-Engineer Exam with latest dumps, guaranteed!

Free demo questions for Google Professional-Data-Engineer Exam Dumps Below:

NEW QUESTION 1

You create an important report for your large team in Google Data Studio 360. The report uses Google BigQuery as its data source. You notice that visualizations are not showing data that is less than 1 hour old. What should you do?

- A. Disable caching by editing the report settings.

- B. Disable caching in BigQuery by editing table details.

- C. Refresh your browser tab showing the visualizations.

- D. Clear your browser history for the past hour then reload the tab showing the virtualizations.

Answer: A

Explanation:

Reference https://support.google.com/datastudio/answer/7020039?hl=en

NEW QUESTION 2

You are responsible for writing your company’s ETL pipelines to run on an Apache Hadoop cluster. The pipeline will require some checkpointing and splitting pipelines. Which method should you use to write the pipelines?

- A. PigLatin using Pig

- B. HiveQL using Hive

- C. Java using MapReduce

- D. Python using MapReduce

Answer: D

NEW QUESTION 3

All Google Cloud Bigtable client requests go through a front-end server they are sent to a Cloud Bigtable node.

- A. before

- B. after

- C. only if

- D. once

Answer: A

Explanation:

In a Cloud Bigtable architecture all client requests go through a front-end server before they are sent to a Cloud Bigtable node.

The nodes are organized into a Cloud Bigtable cluster, which belongs to a Cloud Bigtable instance, which is a container for the cluster. Each node in the cluster handles a subset of the requests to the cluster.

When additional nodes are added to a cluster, you can increase the number of simultaneous requests that the cluster can handle, as well as the maximum throughput for the entire cluster.

Reference: https://cloud.google.com/bigtable/docs/overview

NEW QUESTION 4

You are working on a niche product in the image recognition domain. Your team has developed a model that is dominated by custom C++ TensorFlow ops your team has implemented. These ops are used inside your main training loop and are performing bulky matrix multiplications. It currently takes up to several days to train a model. You want to decrease this time significantly and keep the cost low by using an accelerator on Google Cloud. What should you do?

- A. Use Cloud TPUs without any additional adjustment to your code.

- B. Use Cloud TPUs after implementing GPU kernel support for your customs ops.

- C. Use Cloud GPUs after implementing GPU kernel support for your customs ops.

- D. Stay on CPUs, and increase the size of the cluster you’re training your model on.

Answer: B

NEW QUESTION 5

You are working on a sensitive project involving private user data. You have set up a project on Google Cloud Platform to house your work internally. An external consultant is going to assist with coding a complex transformation in a Google Cloud Dataflow pipeline for your project. How should you maintain users’ privacy?

- A. Grant the consultant the Viewer role on the project.

- B. Grant the consultant the Cloud Dataflow Developer role on the project.

- C. Create a service account and allow the consultant to log on with it.

- D. Create an anonymized sample of the data for the consultant to work with in a different project.

Answer: C

NEW QUESTION 6

You are creating a new pipeline in Google Cloud to stream IoT data from Cloud Pub/Sub through Cloud Dataflow to BigQuery. While previewing the data, you notice that roughly 2% of the data appears to be corrupt. You need to modify the Cloud Dataflow pipeline to filter out this corrupt data. What should you do?

- A. Add a SideInput that returns a Boolean if the element is corrupt.

- B. Add a ParDo transform in Cloud Dataflow to discard corrupt elements.

- C. Add a Partition transform in Cloud Dataflow to separate valid data from corrupt data.

- D. Add a GroupByKey transform in Cloud Dataflow to group all of the valid data together and discard the rest.

Answer: B

NEW QUESTION 7

You need to compose visualizations for operations teams with the following requirements: Which approach meets the requirements?

- A. Load the data into Google Sheets, use formulas to calculate a metric, and use filters/sorting to show only suboptimal links in a table.

- B. Load the data into Google BigQuery tables, write Google Apps Script that queries the data, calculates the metric, and shows only suboptimal rows in a table in Google Sheets.

- C. Load the data into Google Cloud Datastore tables, write a Google App Engine Application that queries all rows, applies a function to derive the metric, and then renders results in a table using the Google charts and visualization API.

- D. Load the data into Google BigQuery tables, write a Google Data Studio 360 report that connects to your data, calculates a metric, and then uses a filter expression to show only suboptimal rows in a table.

Answer: C

NEW QUESTION 8

The YARN ResourceManager and the HDFS NameNode interfaces are available on a Cloud Dataproc cluster .

- A. application node

- B. conditional node

- C. master node

- D. worker node

Answer: C

Explanation:

The YARN ResourceManager and the HDFS NameNode interfaces are available on a Cloud Dataproc cluster master node. The cluster master-host-name is the name of your Cloud Dataproc cluster followed by an -m suffix—for example, if your cluster is named "my-cluster", the master-host-name would be "my-cluster-m".

Reference: https://cloud.google.com/dataproc/docs/concepts/cluster-web-interfaces#interfaces

NEW QUESTION 9

Which software libraries are supported by Cloud Machine Learning Engine?

- A. Theano and TensorFlow

- B. Theano and Torch

- C. TensorFlow

- D. TensorFlow and Torch

Answer: C

Explanation:

Cloud ML Engine mainly does two things:

Enables you to train machine learning models at scale by running TensorFlow training applications in the cloud.

Hosts those trained models for you in the cloud so that you can use them to get predictions about new data.

Reference: https://cloud.google.com/ml-engine/docs/technical-overview#what_it_does

NEW QUESTION 10

You create a new report for your large team in Google Data Studio 360. The report uses Google BigQuery as its data source. It is company policy to ensure employees can view only the data associated with their region, so you create and populate a table for each region. You need to enforce the regional access policy to the data.

Which two actions should you take? (Choose two.)

- A. Ensure all the tables are included in global dataset.

- B. Ensure each table is included in a dataset for a region.

- C. Adjust the settings for each table to allow a related region-based security group view access.

- D. Adjust the settings for each view to allow a related region-based security group view access.

- E. Adjust the settings for each dataset to allow a related region-based security group view access.

Answer: BD

NEW QUESTION 11

You are designing a data processing pipeline. The pipeline must be able to scale automatically as load increases. Messages must be processed at least once, and must be ordered within windows of 1 hour. How should you design the solution?

- A. Use Apache Kafka for message ingestion and use Cloud Dataproc for streaming analysis.

- B. Use Apache Kafka for message ingestion and use Cloud Dataflow for streaming analysis.

- C. Use Cloud Pub/Sub for message ingestion and Cloud Dataproc for streaming analysis.

- D. Use Cloud Pub/Sub for message ingestion and Cloud Dataflow for streaming analysis.

Answer: C

NEW QUESTION 12

You are designing storage for two relational tables that are part of a 10-TB database on Google Cloud. You want to support transactions that scale horizontally. You also want to optimize data for range queries on nonkey columns. What should you do?

- A. Use Cloud SQL for storag

- B. Add secondary indexes to support query patterns.

- C. Use Cloud SQL for storag

- D. Use Cloud Dataflow to transform data to support query patterns.

- E. Use Cloud Spanner for storag

- F. Add secondary indexes to support query patterns.

- G. Use Cloud Spanner for storag

- H. Use Cloud Dataflow to transform data to support query patterns.

Answer: D

Explanation:

Reference: https://cloud.google.com/solutions/data-lifecycle-cloud-platform

NEW QUESTION 13

You are training a spam classifier. You notice that you are overfitting the training data. Which three actions can you take to resolve this problem? (Choose three.)

- A. Get more training examples

- B. Reduce the number of training examples

- C. Use a smaller set of features

- D. Use a larger set of features

- E. Increase the regularization parameters

- F. Decrease the regularization parameters

Answer: ADF

NEW QUESTION 14

MJTelco’s Google Cloud Dataflow pipeline is now ready to start receiving data from the 50,000 installations. You want to allow Cloud Dataflow to scale its compute power up as required. Which Cloud Dataflow pipeline configuration setting should you update?

- A. The zone

- B. The number of workers

- C. The disk size per worker

- D. The maximum number of workers

Answer: A

NEW QUESTION 15

You are building an application to share financial market data with consumers, who will receive data feeds. Data is collected from the markets in real time. Consumers will receive the data in the following ways: Real-time event stream

Real-time event stream ANSI SQL access to real-time stream and historical data

ANSI SQL access to real-time stream and historical data  Batch historical exports

Batch historical exports

Which solution should you use?

- A. Cloud Dataflow, Cloud SQL, Cloud Spanner

- B. Cloud Pub/Sub, Cloud Storage, BigQuery

- C. Cloud Dataproc, Cloud Dataflow, BigQuery

- D. Cloud Pub/Sub, Cloud Dataproc, Cloud SQL

Answer: A

NEW QUESTION 16

Your startup has never implemented a formal security policy. Currently, everyone in the company has access to the datasets stored in Google BigQuery. Teams have freedom to use the service as they see fit, and they have not documented their use cases. You have been asked to secure the data warehouse. You need to discover what everyone is doing. What should you do first?

- A. Use Google Stackdriver Audit Logs to review data access.

- B. Get the identity and access management IIAM) policy of each table

- C. Use Stackdriver Monitoring to see the usage of BigQuery query slots.

- D. Use the Google Cloud Billing API to see what account the warehouse is being billed to.

Answer: C

NEW QUESTION 17

You are developing an application on Google Cloud that will automatically generate subject labels for users’ blog posts. You are under competitive pressure to add this feature quickly, and you have no additional developer resources. No one on your team has experience with machine learning. What should you do?

- A. Call the Cloud Natural Language API from your applicatio

- B. Process the generated Entity Analysis as labels.

- C. Call the Cloud Natural Language API from your applicatio

- D. Process the generated Sentiment Analysis as labels.

- E. Build and train a text classification model using TensorFlo

- F. Deploy the model using Cloud Machine Learning Engin

- G. Call the model from your application and process the results as labels.

- H. Build and train a text classification model using TensorFlo

- I. Deploy the model using a KubernetesEngine cluste

- J. Call the model from your application and process the results as labels.

Answer: B

NEW QUESTION 18

Your company is loading comma-separated values (CSV) files into Google BigQuery. The data is fully imported successfully; however, the imported data is not matching byte-to-byte to the source file. What is the most likely cause of this problem?

- A. The CSV data loaded in BigQuery is not flagged as CSV.

- B. The CSV data has invalid rows that were skipped on import.

- C. The CSV data loaded in BigQuery is not using BigQuery’s default encoding.

- D. The CSV data has not gone through an ETL phase before loading into BigQuery.

Answer: B

NEW QUESTION 19

Which methods can be used to reduce the number of rows processed by BigQuery?

- A. Splitting tables into multiple tables; putting data in partitions

- B. Splitting tables into multiple tables; putting data in partitions; using the LIMIT clause

- C. Putting data in partitions; using the LIMIT clause

- D. Splitting tables into multiple tables; using the LIMIT clause

Answer: A

Explanation:

If you split a table into multiple tables (such as one table for each day), then you can limit your query to the data in specific tables (such as for particular days). A better method is to use a partitioned table, as long as your data can be separated by the day.

If you use the LIMIT clause, BigQuery will still process the entire table. Reference: https://cloud.google.com/bigquery/docs/partitioned-tables

NEW QUESTION 20

You are building a new application that you need to collect data from in a scalable way. Data arrives continuously from the application throughout the day, and you expect to generate approximately 150 GB of JSON data per day by the end of the year. Your requirements are: Decoupling producer from consumer

Decoupling producer from consumer Space and cost-efficient storage of the raw ingested data, which is to be stored indefinitely

Space and cost-efficient storage of the raw ingested data, which is to be stored indefinitely  Near real-time SQL query

Near real-time SQL query Maintain at least 2 years of historical data, which will be queried with SQ

Maintain at least 2 years of historical data, which will be queried with SQ

Which pipeline should you use to meet these requirements?

- A. Create an application that provides an AP

- B. Write a tool to poll the API and write data to Cloud Storage as gzipped JSON files.

- C. Create an application that writes to a Cloud SQL database to store the dat

- D. Set up periodic exports of the database to write to Cloud Storage and load into BigQuery.

- E. Create an application that publishes events to Cloud Pub/Sub, and create Spark jobs on Cloud Dataproc to convert the JSON data to Avro format, stored on HDFS on Persistent Disk.

- F. Create an application that publishes events to Cloud Pub/Sub, and create a Cloud Dataflow pipeline that transforms the JSON event payloads to Avro, writing the data to Cloud Storage and BigQuery.

Answer: A

NEW QUESTION 21

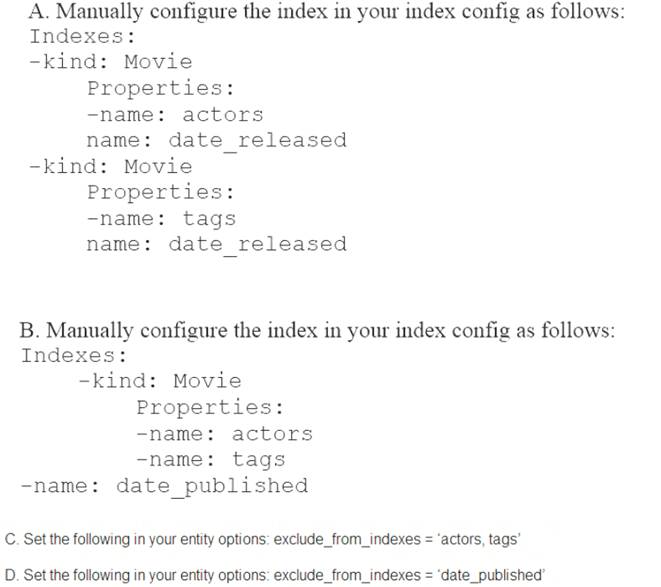

You are deploying a new storage system for your mobile application, which is a media streaming service. You decide the best fit is Google Cloud Datastore. You have entities with multiple properties, some of which can take on multiple values. For example, in the entity ‘Movie’ the property ‘actors’ and the property ‘tags’ have multiple values but the property ‘date released’ does not. A typical query would ask for all movies with actor=<actorname> ordered by date_released or all movies with tag=Comedy ordered by date_released. How should you avoid a combinatorial explosion in the number of indexes?

- A. Option A

- B. Option B.

- C. Option C

- D. Option D

Answer: A

NEW QUESTION 22

You have several Spark jobs that run on a Cloud Dataproc cluster on a schedule. Some of the jobs run in sequence, and some of the jobs run concurrently. You need to automate this process. What should you do?

- A. Create a Cloud Dataproc Workflow Template

- B. Create an initialization action to execute the jobs

- C. Create a Directed Acyclic Graph in Cloud Composer

- D. Create a Bash script that uses the Cloud SDK to create a cluster, execute jobs, and then tear down the cluster

Answer: A

NEW QUESTION 23

When you store data in Cloud Bigtable, what is the recommended minimum amount of stored data?

- A. 500 TB

- B. 1 GB

- C. 1 TB

- D. 500 GB

Answer: C

Explanation:

Cloud Bigtable is not a relational database. It does not support SQL queries, joins, or multi-row transactions. It is not a good solution for less than 1 TB of data.

Reference: https://cloud.google.com/bigtable/docs/overview#title_short_and_other_storage_options

NEW QUESTION 24

MJTelco is building a custom interface to share data. They have these requirements:  They need to do aggregations over their petabyte-scale datasets.

They need to do aggregations over their petabyte-scale datasets. They need to scan specific time range rows with a very fast response time (milliseconds). Which combination of Google Cloud Platform products should you recommend?

They need to scan specific time range rows with a very fast response time (milliseconds). Which combination of Google Cloud Platform products should you recommend?

- A. Cloud Datastore and Cloud Bigtable

- B. Cloud Bigtable and Cloud SQL

- C. BigQuery and Cloud Bigtable

- D. BigQuery and Cloud Storage

Answer: C

NEW QUESTION 25

......

Recommend!! Get the Full Professional-Data-Engineer dumps in VCE and PDF From Thedumpscentre.com, Welcome to Download: https://www.thedumpscentre.com/Professional-Data-Engineer-dumps/ (New 239 Q&As Version)