2026 New Professional-Data-Engineer Exam Dumps with PDF and VCE Free: https://www.2passeasy.com/dumps/Professional-Data-Engineer/

It is impossible to pass Google Professional-Data-Engineer exam without any help in the short term. Come to Ucertify soon and find the most advanced, correct and guaranteed Google Professional-Data-Engineer practice questions. You will get a surprising result by our Renovate Google Professional Data Engineer Exam practice guides.

Check Professional-Data-Engineer free dumps before getting the full version:

NEW QUESTION 1

Which Cloud Dataflow / Beam feature should you use to aggregate data in an unbounded data source every hour based on the time when the data entered the pipeline?

- A. An hourly watermark

- B. An event time trigger

- C. The with Allowed Lateness method

- D. A processing time trigger

Answer: D

Explanation:

When collecting and grouping data into windows, Beam uses triggers to determine when to emit the aggregated results of each window.

Processing time triggers. These triggers operate on the processing time – the time when the data element is processed at any given stage in the pipeline.

Event time triggers. These triggers operate on the event time, as indicated by the timestamp on each data

element. Beam’s default trigger is event time-based.

Reference: https://beam.apache.org/documentation/programming-guide/#triggers

NEW QUESTION 2

You have historical data covering the last three years in BigQuery and a data pipeline that delivers new data to BigQuery daily. You have noticed that when the Data Science team runs a query filtered on a date column and limited to 30–90 days of data, the query scans the entire table. You also noticed that your bill is increasing more quickly than you expected. You want to resolve the issue as cost-effectively as possible while maintaining the ability to conduct SQL queries. What should you do?

- A. Re-create the tables using DD

- B. Partition the tables by a column containing a TIMESTAMP or DATE Type.

- C. Recommend that the Data Science team export the table to a CSV file on Cloud Storage and use Cloud Datalab to explore the data by reading the files directly.

- D. Modify your pipeline to maintain the last 30–90 days of data in one table and the longer history in a different table to minimize full table scans over the entire history.

- E. Write an Apache Beam pipeline that creates a BigQuery table per da

- F. Recommend that the Data Science team use wildcards on the table name suffixes to select the data they need.

Answer: C

NEW QUESTION 3

You are operating a streaming Cloud Dataflow pipeline. Your engineers have a new version of the pipeline with a different windowing algorithm and triggering strategy. You want to update the running pipeline with the new version. You want to ensure that no data is lost during the update. What should you do?

- A. Update the Cloud Dataflow pipeline inflight by passing the --update option with the --jobName set to the existing job name

- B. Update the Cloud Dataflow pipeline inflight by passing the --update option with the --jobName set to a new unique job name

- C. Stop the Cloud Dataflow pipeline with the Cancel optio

- D. Create a new Cloud Dataflow job with the updated code

- E. Stop the Cloud Dataflow pipeline with the Drain optio

- F. Create a new Cloud Dataflow job with the updated code

Answer: A

NEW QUESTION 4

You operate an IoT pipeline built around Apache Kafka that normally receives around 5000 messages per second. You want to use Google Cloud Platform to create an alert as soon as the moving average over 1 hour drops below 4000 messages per second. What should you do?

- A. Consume the stream of data in Cloud Dataflow using Kafka I

- B. Set a sliding time window of 1 hour every 5 minute

- C. Compute the average when the window closes, and send an alert if the average is less than 4000 messages.

- D. Consume the stream of data in Cloud Dataflow using Kafka I

- E. Set a fixed time window of 1 hour.Compute the average when the window closes, and send an alert if the average is less than 4000 messages.

- F. Use Kafka Connect to link your Kafka message queue to Cloud Pub/Su

- G. Use a Cloud Dataflow template to write your messages from Cloud Pub/Sub to Cloud Bigtabl

- H. Use Cloud Scheduler to run a script every hour that counts the number of rows created in Cloud Bigtable in the last hou

- I. If that number falls below 4000, send an alert.

- J. Use Kafka Connect to link your Kafka message queue to Cloud Pub/Su

- K. Use a Cloud Dataflow template to write your messages from Cloud Pub/Sub to BigQuer

- L. Use Cloud Scheduler to run a script every five minutes that counts the number of rows created in BigQuery in the last hou

- M. If that number falls below 4000, send an alert.

Answer: C

NEW QUESTION 5

You have an Apache Kafka Cluster on-prem with topics containing web application logs. You need to replicate the data to Google Cloud for analysis in BigQuery and Cloud Storage. The preferred replication method is mirroring to avoid deployment of Kafka Connect plugins.

What should you do?

- A. Deploy a Kafka cluster on GCE VM Instance

- B. Configure your on-prem cluster to mirror your topics tothe cluster running in GC

- C. Use a Dataproc cluster or Dataflow job to read from Kafka and write to GCS.

- D. Deploy a Kafka cluster on GCE VM Instances with the PubSub Kafka connector configured as a Sink connecto

- E. Use a Dataproc cluster or Dataflow job to read from Kafka and write to GCS.

- F. Deploy the PubSub Kafka connector to your on-prem Kafka cluster and configure PubSub as a Source connecto

- G. Use a Dataflow job to read fron PubSub and write to GCS.

- H. Deploy the PubSub Kafka connector to your on-prem Kafka cluster and configure PubSub as a Sink connecto

- I. Use a Dataflow job to read fron PubSub and write to GCS.

Answer: A

NEW QUESTION 6

You’ve migrated a Hadoop job from an on-prem cluster to dataproc and GCS. Your Spark job is a complicated analytical workload that consists of many shuffing operations and initial data are parquet files (on average

200-400 MB size each). You see some degradation in performance after the migration to Dataproc, so you’d like to optimize for it. You need to keep in mind that your organization is very cost-sensitive, so you’d like to continue using Dataproc on preemptibles (with 2 non-preemptible workers only) for this workload.

What should you do?

- A. Increase the size of your parquet files to ensure them to be 1 GB minimum.

- B. Switch to TFRecords formats (app

- C. 200MB per file) instead of parquet files.

- D. Switch from HDDs to SSDs, copy initial data from GCS to HDFS, run the Spark job and copy results back to GCS.

- E. Switch from HDDs to SSDs, override the preemptible VMs configuration to increase the boot disk size.

Answer: C

NEW QUESTION 7

What are two of the characteristics of using online prediction rather than batch prediction?

- A. It is optimized to handle a high volume of data instances in a job and to run more complex models.

- B. Predictions are returned in the response message.

- C. Predictions are written to output files in a Cloud Storage location that you specify.

- D. It is optimized to minimize the latency of serving predictions.

Answer: BD

Explanation:

Online prediction

Optimized to minimize the latency of serving predictions. Predictions returned in the response message.

Batch prediction

Optimized to handle a high volume of instances in a job and to run more complex models. Predictions written to output files in a Cloud Storage location that you specify.

Reference:

https://cloud.google.com/ml-engine/docs/prediction-overview#online_prediction_versus_batch_prediction

NEW QUESTION 8

Your financial services company is moving to cloud technology and wants to store 50 TB of financial timeseries data in the cloud. This data is updated frequently and new data will be streaming in all the time. Your company also wants to move their existing Apache Hadoop jobs to the cloud to get insights into this data.

Which product should they use to store the data?

- A. Cloud Bigtable

- B. Google BigQuery

- C. Google Cloud Storage

- D. Google Cloud Datastore

Answer: A

Explanation:

Reference: https://cloud.google.com/bigtable/docs/schema-design-time-series

NEW QUESTION 9

After migrating ETL jobs to run on BigQuery, you need to verify that the output of the migrated jobs is the same as the output of the original. You’ve loaded a table containing the output of the original job and want to compare the contents with output from the migrated job to show that they are identical. The tables do not contain a primary key column that would enable you to join them together for comparison.

What should you do?

- A. Select random samples from the tables using the RAND() function and compare the samples.

- B. Select random samples from the tables using the HASH() function and compare the samples.

- C. Use a Dataproc cluster and the BigQuery Hadoop connector to read the data from each table and calculate a hash from non-timestamp columns of the table after sortin

- D. Compare the hashes of each table.

- E. Create stratified random samples using the OVER() function and compare equivalent samples from each table.

Answer: B

NEW QUESTION 10

For the best possible performance, what is the recommended zone for your Compute Engine instance and Cloud Bigtable instance?

- A. Have the Compute Engine instance in the furthest zone from the Cloud Bigtable instance.

- B. Have both the Compute Engine instance and the Cloud Bigtable instance to be in different zones.

- C. Have both the Compute Engine instance and the Cloud Bigtable instance to be in the same zone.

- D. Have the Cloud Bigtable instance to be in the same zone as all of the consumers of your data.

Answer: C

Explanation:

It is recommended to create your Compute Engine instance in the same zone as your Cloud Bigtable instance for the best possible performance,

If it's not possible to create a instance in the same zone, you should create your instance in another zone within the same region. For example, if your Cloud Bigtable instance is located in us-central1-b, you could create your instance in us-central1-f. This change may result in several milliseconds of additional latency for each Cloud Bigtable request.

It is recommended to avoid creating your Compute Engine instance in a different region from

your Cloud Bigtable instance, which can add hundreds of milliseconds of latency to each Cloud Bigtable request.

Reference: https://cloud.google.com/bigtable/docs/creating-compute-instance

NEW QUESTION 11

You are deploying MariaDB SQL databases on GCE VM Instances and need to configure monitoring and alerting. You want to collect metrics including network connections, disk IO and replication status from MariaDB with minimal development effort and use StackDriver for dashboards and alerts.

What should you do?

- A. Install the OpenCensus Agent and create a custom metric collection application with a StackDriver exporter.

- B. Place the MariaDB instances in an Instance Group with a Health Check.

- C. Install the StackDriver Logging Agent and configure fluentd in_tail plugin to read MariaDB logs.

- D. Install the StackDriver Agent and configure the MySQL plugin.

Answer: C

NEW QUESTION 12

Your company uses a proprietary system to send inventory data every 6 hours to a data ingestion service in the cloud. Transmitted data includes a payload of several fields and the timestamp of the transmission. If there are any concerns about a transmission, the system re-transmits the data. How should you deduplicate the data most efficiency?

- A. Assign global unique identifiers (GUID) to each data entry.

- B. Compute the hash value of each data entry, and compare it with all historical data.

- C. Store each data entry as the primary key in a separate database and apply an index.

- D. Maintain a database table to store the hash value and other metadata for each data entry.

Answer: D

NEW QUESTION 13

You work for a shipping company that uses handheld scanners to read shipping labels. Your company has strict data privacy standards that require scanners to only transmit recipients’ personally identifiable information (PII) to analytics systems, which violates user privacy rules. You want to quickly build a scalable solution using cloud-native managed services to prevent exposure of PII to the analytics systems. What should you do?

- A. Create an authorized view in BigQuery to restrict access to tables with sensitive data.

- B. Install a third-party data validation tool on Compute Engine virtual machines to check the incoming data for sensitive information.

- C. Use Stackdriver logging to analyze the data passed through the total pipeline to identify transactions that may contain sensitive information.

- D. Build a Cloud Function that reads the topics and makes a call to the Cloud Data Loss Prevention AP

- E. Use the tagging and confidence levels to either pass or quarantine the data in a bucket for review.

Answer: A

NEW QUESTION 14

You are selecting services to write and transform JSON messages from Cloud Pub/Sub to BigQuery for a data pipeline on Google Cloud. You want to minimize service costs. You also want to monitor and accommodate input data volume that will vary in size with minimal manual intervention. What should you do?

- A. Use Cloud Dataproc to run your transformation

- B. Monitor CPU utilization for the cluste

- C. Resize the number of worker nodes in your cluster via the command line.

- D. Use Cloud Dataproc to run your transformation

- E. Use the diagnose command to generate an operational output archiv

- F. Locate the bottleneck and adjust cluster resources.

- G. Use Cloud Dataflow to run your transformation

- H. Monitor the job system lag with Stackdrive

- I. Use the default autoscaling setting for worker instances.

- J. Use Cloud Dataflow to run your transformation

- K. Monitor the total execution time for a sampling of job

- L. Configure the job to use non-default Compute Engine machine types when needed.

Answer: B

NEW QUESTION 15

In order to securely transfer web traffic data from your computer's web browser to the Cloud Dataproc cluster you should use a(n) .

- A. VPN connection

- B. Special browser

- C. SSH tunnel

- D. FTP connection

Answer: C

Explanation:

To connect to the web interfaces, it is recommended to use an SSH tunnel to create a secure connection to the master node.

Reference:

https://cloud.google.com/dataproc/docs/concepts/cluster-web-interfaces#connecting_to_the_web_interfaces

NEW QUESTION 16

How would you query specific partitions in a BigQuery table?

- A. Use the DAY column in the WHERE clause

- B. Use the EXTRACT(DAY) clause

- C. Use the PARTITIONTIME pseudo-column in the WHERE clause

- D. Use DATE BETWEEN in the WHERE clause

Answer: C

Explanation:

Partitioned tables include a pseudo column named _PARTITIONTIME that contains a date-based timestamp for data loaded into the table. To limit a query to particular partitions (such as Jan 1st and 2nd of 2017), use a clause similar to this:

WHERE _PARTITIONTIME BETWEEN TIMESTAMP('2017-01-01') AND TIMESTAMP('2017-01-02')

Reference: https://cloud.google.com/bigquery/docs/partitioned-tables#the_partitiontime_pseudo_column

NEW QUESTION 17

You are developing a software application using Google's Dataflow SDK, and want to use conditional, for loops and other complex programming structures to create a branching pipeline. Which component will be used for the data processing operation?

- A. PCollection

- B. Transform

- C. Pipeline

- D. Sink API

Answer: B

Explanation:

In Google Cloud, the Dataflow SDK provides a transform component. It is responsible for the data processing operation. You can use conditional, for loops, and other complex programming structure to create a branching pipeline.

Reference: https://cloud.google.com/dataflow/model/programming-model

NEW QUESTION 18

You have enabled the free integration between Firebase Analytics and Google BigQuery. Firebase now automatically creates a new table daily in BigQuery in the format app_events_YYYYMMDD. You want to query all of the tables for the past 30 days in legacy SQL. What should you do?

- A. Use the TABLE_DATE_RANGE function

- B. Use the WHERE_PARTITIONTIME pseudo column

- C. Use WHERE date BETWEEN YYYY-MM-DD AND YYYY-MM-DD

- D. Use SELECT IF.(date >= YYYY-MM-DD AND date <= YYYY-MM-DD

Answer: A

Explanation:

Reference:

https://cloud.google.com/blog/products/gcp/using-bigquery-and-firebase-analytics-to-understandyour-mobile-ap

NEW QUESTION 19

Your company is running their first dynamic campaign, serving different offers by analyzing real-time data during the holiday season. The data scientists are collecting terabytes of data that rapidly grows every hour during their 30-day campaign. They are using Google Cloud Dataflow to preprocess the data and collect the feature (signals) data that is needed for the machine learning model in Google Cloud Bigtable. The team is observing suboptimal performance with reads and writes of their initial load of 10 TB of data. They want to improve this performance while minimizing cost. What should they do?

- A. Redefine the schema by evenly distributing reads and writes across the row space of the table.

- B. The performance issue should be resolved over time as the site of the BigDate cluster is increased.

- C. Redesign the schema to use a single row key to identify values that need to be updated frequently in the cluster.

- D. Redesign the schema to use row keys based on numeric IDs that increase sequentially per user viewing the offers.

Answer: A

NEW QUESTION 20

You want to use a BigQuery table as a data sink. In which writing mode(s) can you use BigQuery as a sink?

- A. Both batch and streaming

- B. BigQuery cannot be used as a sink

- C. Only batch

- D. Only streaming

Answer: A

Explanation:

When you apply a BigQueryIO.Write transform in batch mode to write to a single table, Dataflow invokes a BigQuery load job. When you apply a BigQueryIO.Write transform in streaming mode or in batch mode using a function to specify the destination table, Dataflow uses BigQuery's streaming inserts

Reference: https://cloud.google.com/dataflow/model/bigquery-io

NEW QUESTION 21

You have developed three data processing jobs. One executes a Cloud Dataflow pipeline that transforms data uploaded to Cloud Storage and writes results to BigQuery. The second ingests data from on-premises servers and uploads it to Cloud Storage. The third is a Cloud Dataflow pipeline that gets information from third-party data providers and uploads the information to Cloud Storage. You need to be able to schedule and monitor the execution of these three workflows and manually execute them when needed. What should you do?

- A. Create a Direct Acyclic Graph in Cloud Composer to schedule and monitor the jobs.

- B. Use Stackdriver Monitoring and set up an alert with a Webhook notification to trigger the jobs.

- C. Develop an App Engine application to schedule and request the status of the jobs using GCP API calls.

- D. Set up cron jobs in a Compute Engine instance to schedule and monitor the pipelines using GCP API calls.

Answer: D

NEW QUESTION 22

You work for a manufacturing plant that batches application log files together into a single log file once a day at 2:00 AM. You have written a Google Cloud Dataflow job to process that log file. You need to make sure the log file in processed once per day as inexpensively as possible. What should you do?

- A. Change the processing job to use Google Cloud Dataproc instead.

- B. Manually start the Cloud Dataflow job each morning when you get into the office.

- C. Create a cron job with Google App Engine Cron Service to run the Cloud Dataflow job.

- D. Configure the Cloud Dataflow job as a streaming job so that it processes the log data immediately.

Answer: C

NEW QUESTION 23

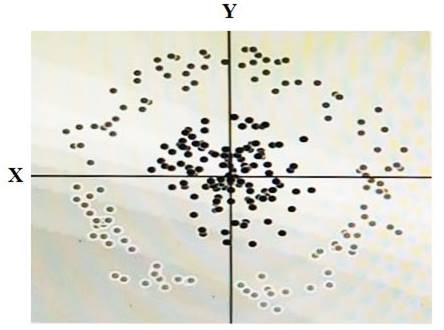

You have some data, which is shown in the graphic below. The two dimensions are X and Y, and the shade of each dot represents what class it is. You want to classify this data accurately using a linear algorithm.

To do this you need to add a synthetic feature. What should the value of that feature be?

- A. X^2+Y^2

- B. X^2

- C. Y^2

- D. cos(X)

Answer: D

NEW QUESTION 24

You have a data stored in BigQuery. The data in the BigQuery dataset must be highly available. You need to define a storage, backup, and recovery strategy of this data that minimizes cost. How should you configure the BigQuery table?

- A. Set the BigQuery dataset to be regiona

- B. In the event of an emergency, use a point-in-time snapshot to recover the data.

- C. Set the BigQuery dataset to be regiona

- D. Create a scheduled query to make copies of the data to tables suffixed with the time of the backu

- E. In the event of an emergency, use the backup copy of the table.

- F. Set the BigQuery dataset to be multi-regiona

- G. In the event of an emergency, use a point-in-time snapshot to recover the data.

- H. Set the BigQuery dataset to be multi-regiona

- I. Create a scheduled query to make copies of the data to tables suffixed with the time of the backu

- J. In the event of an emergency, use the backup copy of the table.

Answer: B

NEW QUESTION 25

......

Recommend!! Get the Full Professional-Data-Engineer dumps in VCE and PDF From Thedumpscentre.com, Welcome to Download: https://www.thedumpscentre.com/Professional-Data-Engineer-dumps/ (New 239 Q&As Version)